Central processing unit


{{subpages}}

A '''central processing unit''' ('''CPU'''), often called the '''processor''', is the component of an electronic [[computer]] that carries out the instructions of its programs and manipulates data; this includes performing calculations on numbers and determining which steps to perform next.

The function to be performed at any point in time is determined by a [[machine instruction]] (also called [[machine code]], or loosely an ''opcode''), which is normally retrieved automatically from [[memory]]. A sequence of such instructions, executed in order, is called a computer [[program]].

Depending on the exact design of the particular CPU, arithmetic operations may be performed on two numbers that have previously been stored inside the processor in special storage elements called [[register]]s, or one or both may be retrieved from memory. After a calculation is performed, the resulting (newly calculated) number may be retained in a register, or written back to the computer's memory (again, depending on the particular CPU at hand).

Today's processors also do much more than just perform operations selected by program opcodes; they also perform [[data flow analysis]] on the [[instruction stream]] and apply a number of advanced [[heuristics]] to improve the [[throughput rate]] of [[instructions]]. Some techniques for speeding up processors are discussed in this article. For more information, see [[computer architecture]].

A processor that is manufactured as a single [[integrated circuit]] is usually known as a [[microprocessor]]. Most CPUs in modern computers are microprocessors, and microprocessors are also used in many everyday items, from [[automobile]]s and household appliances to cellular phones and children's toys.

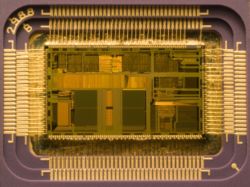

[[Image:80486dx2-large.jpg|right|thumb|250px|Die of an [[Intel 80486DX2]] microprocessor (actual size: 12×6.75 mm) in its packaging]]

==History==

The phrase "central processing unit" and its abbreviation, CPU, have been used in the computer industry since at least the 1960s {{Ref harvard|weik1961|Weik 1961|a}}. The term describes a certain class of logic machines that can execute [[computer program|instruction]]s. This broad definition can easily be applied to many early computers that existed long before the term "CPU" came into widespread usage. The form, design and implementation of CPUs have changed dramatically since the earliest examples.<ref>Early CPUs were custom-designed for each new kind of computer. Custom processors gave way to mass-produced, general-purpose processors. This trend accelerated with the advent of the [[integrated circuit]] (IC), which allowed processors to become tiny (on the order of [[millimeter]]s). Miniaturization and standardization led to additional uses, such as in [[personal digital assistant]]s (PDAs).</ref> For more information, please see the [[history of processors]] article.

==CPU operation==

The basic operation of most CPUs, regardless of the physical form they take, is to execute a sequence of stored instructions called a program. The program is represented by a series of numbers that are stored in some kind of [[Memory (computers)|memory]]. Many CPUs process each instruction in four steps: '''fetch''', '''decode''', '''execute''', and '''writeback'''. CPUs that store and retrieve programs and data in this way follow the [[von Neumann architecture]], which is commonly used in CPUs today.

===Fetch===

The first step, '''fetch''', involves retrieving an [[instruction (computer science)|instruction]] from memory. The location in program memory is determined by a [[program counter]], which is a [[register]] that holds the memory address of the next instruction to be fetched. In other words, the program counter keeps track of the CPU's place in the current program. After an instruction is fetched, the program counter is incremented by the length of the instruction word in terms of memory units. Often the instruction to be fetched must be retrieved from relatively slow memory, causing the CPU to stall while waiting for the instruction to be returned. This is known as a [[bottleneck]].

===Decode===

The instruction that the CPU fetches from memory is used to determine what the CPU is to do. In the '''decode''' step, the instruction is broken up into parts that different portions of the CPU can interpret. The way in which the numerical instruction value is interpreted is defined by the CPU's [[instruction set architecture]] ('''ISA'''). Often, one group of bits in the instruction, called the [[opcode]], indicates which operation to perform. The remaining parts of the number usually provide information required for that instruction, such as [[operand]]s for an [[addition]] operation. Such operands may be given as a constant value (called an immediate value), or as a reference to the value's location: a [[processor register|register]] or a [[memory address]].

In older designs the portions of the CPU responsible for instruction decoding were fixed hardware devices. However, in more abstract and complicated CPUs and ISAs, a [[microprogram]] is often used to assist in translating instructions into various configuration signals for the CPU. This microprogram is sometimes rewritable so that it can be modified to change the way the CPU decodes instructions even after it has been manufactured.
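The splitting of an instruction into an opcode and operand fields can be illustrated with a minimal sketch. The 16-bit layout used here (a 4-bit opcode, a 4-bit register number, and an 8-bit immediate value) is a hypothetical format chosen only for illustration and does not correspond to any particular ISA; the function name <code>decode</code> is likewise an assumption.

```python
# Hypothetical 16-bit instruction word (not a real ISA):
#   bits 15-12: opcode, bits 11-8: register number, bits 7-0: immediate value
OPCODE_SHIFT, REG_SHIFT = 12, 8


def decode(word):
    """Split an instruction word into (opcode, register, immediate) fields."""
    opcode = (word >> OPCODE_SHIFT) & 0xF   # top four bits select the operation
    register = (word >> REG_SHIFT) & 0xF    # next four bits name a register
    immediate = word & 0xFF                 # low eight bits hold a constant
    return opcode, register, immediate


# 0x1A2B -> opcode 1, register 10, immediate 43 (0x2B)
print(decode(0x1A2B))
```

A real decoder performs the same kind of bit-field extraction in hardware, with field widths and positions fixed by the ISA.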

===Execute===

After the fetch and decode steps, the '''execute''' step is performed. During this step, various portions of the CPU are connected so they can perform the desired operation. If, for instance, an addition operation was requested, an [[arithmetic logic unit]] ('''ALU''') will be connected to a set of inputs and a set of outputs. The inputs provide the numbers to be added, and the outputs will contain the final sum. The ALU contains the circuitry to perform simple arithmetic and logical operations on the inputs (like addition and [[bitwise operation]]s). If the addition operation produces a result too large for the CPU to handle, an [[arithmetic overflow]] flag in a flags register may also be set (see the discussion of integer range below).
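As a rough illustration of the overflow case, the following sketch models an addition on a fixed-width ALU. It assumes unsigned operands and an 8-bit width by default; the function name <code>alu_add</code> is hypothetical, and real CPUs typically distinguish between unsigned carry and signed overflow, which this sketch does not.

```python
def alu_add(a, b, bits=8):
    """Add two unsigned integers as a `bits`-wide ALU might.

    Returns (result, overflow): the sum truncated to `bits` bits, and a flag
    that is True when the true sum did not fit in `bits` bits.
    """
    mask = (1 << bits) - 1          # 0xFF for an 8-bit ALU
    total = (a & mask) + (b & mask)
    return total & mask, total > mask


print(alu_add(1, 2))      # small sum: fits, flag is False
print(alu_add(200, 100))  # 300 does not fit in 8 bits: result wraps to 44
```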

===Writeback===

The final step, '''writeback''', simply "writes back" the results of the execute step to some form of memory. Very often the results are written to some internal CPU register for quick access by subsequent instructions. In other cases results may be written to slower, but cheaper and larger, [[Random access memory|main memory]]. Some types of instructions manipulate the program counter rather than directly produce result data. These are generally called "jumps" and facilitate behavior like [[Control flow#Loops|loops]], conditional program execution (through the use of a conditional jump), and [[Subroutine|functions]] in programs.<ref>Some early computers like the [[History_of_computing#Harvard_Mark_I_.281943.29|Harvard Mark I]] did not support any kind of "jump" instruction, effectively limiting the complexity of the programs they could run. It is largely for this reason that these computers are often not considered to contain a CPU proper, despite their close similarity to stored-program computers.</ref> Many instructions will also change the state of bits in a "flags" register. These flags can be used to influence how a program behaves, since they often indicate the outcome of various operations. For example, one type of "compare" instruction considers two values and sets a bit in the flags register according to which one is greater. This flag could then be used by a later jump instruction to determine program flow.

After the execution of the instruction and writeback of the resulting data, the entire process repeats, with the next instruction cycle normally fetching the next-in-sequence instruction because of the incremented value in the program counter. If the completed instruction was a jump, the program counter will be modified to contain the address of the instruction that was jumped to, and program execution continues normally. In more complex CPUs than the one described here, multiple instructions can be fetched, decoded, and executed simultaneously. This section describes a simplified form of what is generally referred to as the "[[Classic RISC pipeline]]," which in fact is quite common among the simple CPUs used in many electronic devices (often called [[microcontroller]]s).
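The four steps described in this section can be tied together in a small simulation. This is only a sketch under stated assumptions: the instruction names (<code>LOADI</code>, <code>ADD</code>, <code>SUBI</code>, <code>JNZ</code>, <code>HALT</code>), the four-register machine, and the single "zero" flag are all invented for illustration and do not describe any real CPU.

```python
def run(program, max_steps=1000):
    """Run a toy program given as a list of (op, a, b) tuples; return registers."""
    regs = [0, 0, 0, 0]          # four general-purpose registers
    zero_flag = False            # a one-bit "flags register"
    pc = 0                       # program counter: index of next instruction
    for _ in range(max_steps):
        if pc >= len(program):
            break
        instr = program[pc]      # fetch
        pc += 1                  # advance to the next-in-sequence instruction
        op, a, b = instr         # decode
        if op == "LOADI":        # execute + writeback: reg[a] = constant b
            regs[a] = b
        elif op == "ADD":        # reg[a] = reg[a] + reg[b]
            regs[a] = regs[a] + regs[b]
        elif op == "SUBI":       # reg[a] = reg[a] - constant b, update flag
            regs[a] = regs[a] - b
            zero_flag = regs[a] == 0
        elif op == "JNZ":        # conditional jump: rewrite the program counter
            if not zero_flag:
                pc = a
        elif op == "HALT":
            break
        else:
            raise ValueError(f"unknown opcode: {op}")
    return regs


# Add 5 to r0 three times, using r2 as a loop counter.
program = [
    ("LOADI", 0, 0),   # r0 = 0 (running total)
    ("LOADI", 1, 5),   # r1 = 5 (amount to add)
    ("LOADI", 2, 3),   # r2 = 3 (loop counter)
    ("ADD", 0, 1),     # address 3: r0 += r1
    ("SUBI", 2, 1),    # r2 -= 1, sets zero flag when r2 reaches 0
    ("JNZ", 3, 0),     # loop back to address 3 while r2 != 0
    ("HALT", 0, 0),
]
print(run(program))
```

The loop is formed entirely by the conditional jump overwriting the program counter, which is the mechanism the text describes for loops and conditional execution.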

==Design and implementation==

===Integer range===

The way a CPU represents numbers is a design choice that affects the most basic ways in which the device functions. Some early digital computers used an electrical model of the common [[decimal]] (base ten) [[numeral system]] to represent numbers internally. A few other computers have used more exotic numeral systems like [[balanced ternary|ternary]] (base three). Nearly all modern CPUs represent numbers in [[binary numeral system|binary]] form, with each digit being represented by some two-valued physical quantity such as a "high" or "low" [[volt]]age.<ref>The physical concept of [[voltage]] is an analog one by its nature, practically having an infinite range of possible values. For the purpose of physical representation of binary numbers, set ranges of voltages are defined as one or zero. These ranges are usually influenced by the operational parameters of the switching elements used to create the CPU, such as a [[Electronic switch#Transistor|transistor's]] threshold level.</ref>

Related to number representation is the size and precision of numbers that a CPU can represent. In the case of a binary CPU, a '''bit''' refers to one significant place in the numbers a CPU deals with. The number of bits (or ''places'') a CPU uses to represent numbers is often called "[[Word (computer science)|word size]]", "bit width", "data path width", or "integer precision" when dealing with strictly integer numbers (as opposed to floating point). This number differs between architectures, and often within different parts of the very same CPU. For example, an [[8-bit]] CPU deals with a range of numbers that can be represented by eight binary digits (each digit having two possible values), that is, 2<sup>8</sup> or 256 discrete numbers. In effect, integer size sets a hardware limit on the range of integers the software run by the CPU can utilize.<ref>While a processor's integer size determines maximum integer ranges, this limit can be (and often is) overcome using a combination of software and hardware techniques. By using additional memory, software can represent integers many magnitudes larger than the processor can. Sometimes the processor's [[ISA]] will even facilitate operations on integers larger than it can natively represent by providing instructions to make large integer arithmetic relatively quick. While dealing with large integers in software is somewhat slower than utilizing a wider integer size in the processor hardware, it is a reasonable trade-off where wider integers in hardware would be cost-prohibitive. See [[Arbitrary-precision arithmetic]] for more on purely software-supported, arbitrary-sized integers.</ref>
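The relationship between bit width and integer range, and the software workaround mentioned in the note, can be sketched as follows. The helper names and the choice of little-endian word order (least significant word first) are assumptions made for this example.

```python
def distinct_values(bits):
    """Number of distinct integers representable in `bits` binary digits."""
    return 2 ** bits


def add_multiword(a_words, b_words, bits=8):
    """Add two equal-length integers stored as lists of `bits`-wide words.

    Words are ordered least significant first. A carry is propagated from
    each word to the next, as software on a narrow CPU might do.
    Returns (sum_words, final_carry).
    """
    mask = (1 << bits) - 1
    carry = 0
    out = []
    for a, b in zip(a_words, b_words):
        total = a + b + carry
        out.append(total & mask)   # keep the low `bits` bits in this word
        carry = total >> bits      # pass the overflow on to the next word
    return out, carry


print(distinct_values(8))                            # 256
# 0x01FF + 0x0001 = 0x0200, computed in 8-bit pieces
print(add_multiword([0xFF, 0x01], [0x01, 0x00]))     # ([0, 2], 0)
```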

Integer range can also affect the number of locations in memory the CPU can '''address''' (locate). For example, if a binary CPU uses 32 bits to represent a memory address, and each memory address represents one [[octet (computing)|octet]] (8 bits), the maximum quantity of memory that CPU can address is 2<sup>32</sup> octets, or 4 [[GiB]]. This is a very simple view of CPU [[address space]], and many designs use more complex addressing methods like [[paging]] in order to locate more memory than their integer range would allow with a flat address space.
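The arithmetic behind the 4 GiB figure can be checked directly. The sketch below assumes a flat address space with one octet per address, as in the example above; the function name is hypothetical.

```python
GIB = 2 ** 30  # one gibibyte, in octets


def addressable_octets(address_bits):
    """Octets reachable with a flat address of `address_bits` bits."""
    return 2 ** address_bits


print(addressable_octets(32))          # 4294967296 octets
print(addressable_octets(32) // GIB)   # 4 GiB
```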

===Clock rate===

Most processors, and indeed most [[sequential logic]] devices, operate ''synchronously''.<ref>In fact, processors include a combination of [[sequential logic]] and [[combinatorial logic]]. (See [[boolean logic]])</ref> Processors "make a move" each time a synchronization signal occurs. This signal, known as a '''clock signal''', usually takes the form of a periodic [[square wave]]. By calculating the maximum time that electrical signals can propagate throughout various branches of a processor's many circuits, designers can select an appropriate [[Frequency|period]] for the clock signal.

This period must be longer than the amount of time it takes for a signal to move, or propagate, in the worst-case scenario. In setting the clock period to a value well above the worst-case propagation delay, it is possible to design the entire CPU and the way it moves data around the "edges" of the rising and falling clock signal. This has the advantage of simplifying the CPU significantly, both from a design perspective and a component-count perspective. However, it also carries the disadvantage that the entire CPU must wait on its slowest elements, even though some portions of it are much faster. This limitation has largely been compensated for by various methods of increasing CPU parallelism (see below).
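The constraint that the clock period must exceed the worst-case propagation delay can be expressed as a short calculation. The path delays and the safety margin below are made-up illustrative values, and the function names are assumptions; real timing analysis involves many more factors.

```python
def min_clock_period_ns(path_delays_ns, margin=1.0):
    """Shortest usable clock period: the slowest path's delay times a margin."""
    return max(path_delays_ns) * margin


def max_frequency_mhz(period_ns):
    """Clock frequency in MHz for a period given in nanoseconds."""
    return 1000.0 / period_ns


# Hypothetical propagation delays of three circuit paths, in nanoseconds.
delays = [2.0, 3.5, 5.0]
period = min_clock_period_ns(delays, margin=1.25)   # 5.0 ns * 1.25 = 6.25 ns
print(period, max_frequency_mhz(period))            # 6.25 160.0
```

Note that the fastest paths (2.0 ns and 3.5 ns here) gain nothing from their speed: the whole design is clocked by the slowest one.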

===Parallelism===

The description of the basic operation of a CPU offered in the previous section describes the simplest form that a CPU can take. This type of CPU, usually referred to as '''subscalar''', operates on and executes one instruction on one or two pieces of data at a time.

Attempts to achieve scalar and better performance have resulted in a variety of design methodologies that cause the CPU to behave less linearly and more in parallel. When referring to parallelism in CPUs, two terms are generally used to classify these design techniques. [[Instruction level parallelism]] (ILP) seeks to increase the rate at which instructions are executed within a CPU (that is, to increase the utilization of on-die execution resources), and [[thread level parallelism]] (TLP) aims to increase the number of [[Thread (computer science)|threads]] (effectively individual programs) that a CPU can execute simultaneously. The two methodologies differ both in how they are implemented and in the relative effectiveness they afford in increasing the CPU's performance for an application.<ref>Neither [[Instruction level parallelism|ILP]] nor [[Thread level parallelism|TLP]] is inherently superior over the other; they are simply different means by which to increase CPU parallelism. As such, they both have advantages and disadvantages, which are often determined by the type of software that the processor is intended to run. High-TLP CPUs are often used in applications that lend themselves well to being split up into numerous smaller applications, so-called "[[embarrassingly parallel]] problems." Frequently, a computational problem that can be solved quickly with high-TLP design strategies like SMP takes significantly more time on high-ILP devices like superscalar CPUs, and vice versa.</ref>

====Instruction level parallelism====

One of the simplest methods used to accomplish increased parallelism is to begin the first steps of instruction fetching and decoding before the prior instruction finishes executing. This is the simplest form of a technique known as ''[[instruction pipelining]]'', and is utilized in almost all modern general-purpose CPUs. Pipelining allows more than one instruction to be executed at any given time by breaking down the execution pathway into discrete stages. This separation can be compared to an assembly line, in which an instruction is made more complete at each stage until it exits the execution pipeline and is retired.

Pipelining does, however, introduce the possibility for a situation where the result of the previous operation is needed to complete the next operation; a condition often termed data dependency conflict. To cope with this, additional care must be taken to check for these sorts of conditions and delay a portion of the instruction pipeline if this occurs. Naturally, accomplishing this requires additional circuitry, so pipelined processors are more complex than subscalar ones (though not very significantly so). A pipelined processor can become very nearly scalar, inhibited only by pipeline stalls (an instruction spending more than one clock cycle in a stage).
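The effect of pipelining and of stalls on throughput can be estimated with a simple cycle count. This sketch assumes an idealized pipeline in which every stage takes exactly one clock cycle and each stall delays completion by one cycle; the function names are hypothetical.

```python
def unpipelined_cycles(n_instructions, stages):
    """Cycles needed when each instruction finishes before the next begins."""
    return n_instructions * stages


def pipelined_cycles(n_instructions, stages, stall_cycles=0):
    """Cycles needed on an idealized pipeline with one cycle per stage.

    The first instruction takes `stages` cycles to pass through; each later
    instruction completes one cycle after its predecessor, plus any stalls.
    """
    if n_instructions == 0:
        return 0
    return stages + (n_instructions - 1) + stall_cycles


n, k = 100, 4
print(unpipelined_cycles(n, k))                  # 400
print(pipelined_cycles(n, k))                    # 103, nearly one per cycle
print(pipelined_cycles(n, k, stall_cycles=20))   # 123 with 20 stall cycles
```

With no stalls the pipelined count approaches one instruction per cycle as the program grows, which is the "very nearly scalar" behavior described above.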

Further improvement upon the idea of instruction pipelining led to the development of a method that decreases the idle time of CPU components even further. Designs that are said to be ''[[superscalar]]'' include a long instruction pipeline and multiple identical execution units. In a superscalar pipeline, multiple instructions are read and passed to a dispatcher, which decides whether or not the instructions can be executed in parallel (simultaneously). If so they are dispatched to available execution units, resulting in the ability for several instructions to be executed simultaneously. In general, the more instructions a superscalar CPU is able to dispatch simultaneously to waiting execution units, the more instructions will be completed in a given cycle.

Most of the difficulty in the design of a superscalar CPU architecture lies in creating an effective dispatcher. The dispatcher needs to be able to quickly and correctly determine whether instructions can be executed in parallel, as well as dispatch them in such a way as to keep as many execution units busy as possible. This requires that the instruction pipeline is filled as often as possible and gives rise to the need in superscalar architectures for significant amounts of [[CPU cache]]. It also makes [[Hazard (computer architecture)|hazard]]-avoiding techniques like [[branch prediction]], [[speculative execution]], and [[out-of-order execution]] crucial to maintaining high levels of performance. By attempting to predict which branch (or path) a conditional instruction will take, the CPU can minimize the number of times that the entire pipeline must wait until a conditional instruction is completed. Speculative execution often provides modest performance increases by executing portions of code that may or may not be needed after a conditional operation completes. Out-of-order execution somewhat rearranges the order in which instructions are executed to reduce delays due to data dependencies.
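The kind of check a dispatcher must make before issuing two instructions in parallel can be sketched in software. The instruction representation below (a destination register and a tuple of source registers) and the function name are assumptions made for illustration; a real dispatcher performs such comparisons in hardware, and many designs use additional techniques to remove some of these conflicts.

```python
from collections import namedtuple

# Hypothetical instruction record: one destination and some source registers.
Instr = namedtuple("Instr", ["dest", "sources"])


def can_issue_together(first, second):
    """True if `second` has no register dependency on `first`."""
    read_after_write = first.dest in second.sources   # second needs first's result
    write_after_write = first.dest == second.dest     # both write the same register
    write_after_read = second.dest in first.sources   # second overwrites first's input
    return not (read_after_write or write_after_write or write_after_read)


a = Instr("r1", ("r2", "r3"))   # r1 = r2 op r3
b = Instr("r4", ("r1", "r5"))   # r4 = r1 op r5  (depends on a's result)
c = Instr("r6", ("r7", "r8"))   # r6 = r7 op r8  (independent of a)

print(can_issue_together(a, b))  # False
print(can_issue_together(a, c))  # True
```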


Both simple pipelining and superscalar design increase a CPU's ILP: pipelining allows a single processor to approach a completion rate of one instruction per cycle ('''IPC'''), and superscalar design allows it to surpass that rate.<ref>Best-case scenario (or peak) IPC rates in very superscalar architectures are difficult to maintain since it is impossible to keep the instruction pipeline filled all the time. Therefore, in highly superscalar CPUs, average sustained IPC is often discussed rather than peak IPC.</ref> Most modern CPU designs are at least somewhat superscalar, and nearly all general purpose CPUs designed in the last decade are superscalar. In later years some of the emphasis in designing high-ILP computers has been moved out of the CPU's hardware and into its software interface, or ISA. The strategy of the [[very long instruction word]] (VLIW) causes some ILP to become implied directly by the software, reducing the amount of work the CPU must perform to boost ILP and thereby reducing the design's complexity.

====Thread level parallelism====

Another strategy commonly used to increase the parallelism of CPUs is to include the ability to run multiple [[thread (computer science)|threads]] (programs) at the same time. In general, high-TLP CPUs have been in use much longer than high-ILP ones. Many of the designs pioneered by [[Cray]] during the late 1970s and 1980s concentrated on TLP as their primary method of enabling enormous (for the time) computing capability. In fact, TLP in the form of multiple thread execution improvements was in use as early as the 1950s {{Ref harvard|Smotherman2005|Smotherman 2005|a}}. In the context of single processor design, the two main methodologies used to accomplish TLP are [[chip-level multiprocessing]] (CMP) and [[simultaneous multithreading]] (SMT). On a higher level, it is very common to build computers with multiple totally independent CPUs in arrangements like [[symmetric multiprocessing]] (SMP) and [[non-uniform memory access]] (NUMA).<ref>Even though SMP and NUMA are both referred to as "systems level" TLP strategies, both methods must still be supported by the CPU's design and implementation.</ref> While using very different means, all of these techniques accomplish the same goal: increasing the number of threads that the CPU(s) can run in parallel.

The CMP and SMP methods of parallelism are similar to one another and the most straightforward. These involve little more conceptually than the utilization of two or more complete and independent CPUs. In the case of CMP, multiple processor "cores" are included in the same package, sometimes on the very same [[integrated circuit]].<ref>While TLP methods have generally been in use longer than ILP methods, Chip-level multiprocessing is more or less only seen in later [[Integrated circuit|IC]]-based microprocessors. This is largely because the term itself is inapplicable to earlier discrete component devices and has only come into use recently.<br/>For several years during the late 1990s and early 2000s, the focus in designing high performance general purpose CPUs was largely on highly superscalar IPC designs, such as the Intel [[Pentium 4]]. However, this trend seems to be reversing somewhat now as major general-purpose CPU designers switch back to less deeply pipelined high-TLP designs. This is evidenced by the proliferation of dual and multi core CMP designs and notably, Intel's newer designs resembling its less superscalar [[P6]] architecture. Late designs in several processor families exhibit CMP, including the [[x86-64]] [[Opteron]] and [[Athlon 64 X2]], the [[SPARC]] [[UltraSPARC T1]], IBM [[POWER4]] and [[POWER5]], as well as several [[video game console]] CPUs like the [[Xbox 360]]'s triple-core PowerPC design.</ref> SMP, on the other hand, includes multiple independent packages. NUMA is somewhat similar to SMP but uses a nonuniform memory access model. This is important for computers with many CPUs because each processor's access time to memory is quickly exhausted with SMP's shared memory model, resulting in significant delays due to CPUs waiting for memory. Therefore, NUMA is considered a much more scalable model, successfully allowing many more CPUs to be used in one computer than SMP can feasibly support. SMT differs somewhat from other TLP improvements in that it attempts to duplicate as few portions of the CPU as possible. While considered a TLP strategy, its implementation actually more resembles superscalar design, and indeed is often used in superscalar microprocessors (such as IBM's [[POWER5]]). Rather than duplicating the entire CPU, SMT designs only duplicate parts needed for instruction fetching, decoding, and dispatch, as well as things like general-purpose registers. This allows an SMT CPU to keep its execution units busy more often by providing them instructions from two different software threads. Again, this is very similar to the ILP superscalar method, but simultaneously executes instructions from ''multiple threads'' rather than executing multiple instructions from the ''same thread'' concurrently.

====Data parallelism====

A less common but increasingly important paradigm of CPUs (and indeed, computing in general) deals with vectors. The processors discussed earlier are all referred to as some type of scalar device.<ref>Earlier the term scalar was used to compare the IPC (instructions per cycle) count afforded by various ILP methods. Here the term is used in the strictly mathematical sense to contrast with vectors. See [[scalar (mathematics)]] and [[vector (spatial)]].</ref> As the name implies, [[vector processor]]s deal with multiple pieces of data in the context of one instruction. This contrasts with scalar processors, which deal with one piece of data for every instruction. These two schemes of dealing with data are generally referred to as [[SISD]] (single instruction, single data) and [[SIMD]] (single instruction, multiple data), respectively. The great utility in creating CPUs that deal with vectors of data lies in optimizing tasks that tend to require the same operation (for example, a sum or a [[dot product]]) to be performed on a large set of data. Some classic examples of these types of tasks are [[multimedia]] applications (images, video, and sound), as well as many types of [[Scientific computing|scientific]] and engineering tasks. Whereas a scalar CPU must complete the entire process of fetching, decoding, and executing each instruction and value in a set of data, a vector CPU can perform a single operation on a comparatively large set of data with one instruction. Of course, this is only possible when the application tends to require many steps which apply one operation to a large set of data.

Most early vector CPUs, such as the [[Cray-1]], were associated almost exclusively with scientific research and [[cryptography]] applications. However, as multimedia has largely shifted to digital media, the need for some form of SIMD in general-purpose CPUs has become significant. Shortly after [[Floating point unit|floating point execution units]] started to become commonplace to include in general-purpose processors, specifications for and implementations of SIMD execution units also began to appear for general-purpose CPUs. Some of these early SIMD specifications like Intel's [[MMX]] were integer-only. This proved to be a significant impediment for some software developers, since many of the applications that benefit from SIMD primarily deal with [[floating point]] numbers. Progressively, these early designs were refined and remade into some of the common, modern SIMD specifications, which are usually associated with one ISA. Some notable modern examples are Intel's [[Streaming SIMD Extensions|SSE]] and the PowerPC-related [[AltiVec]] (also known as VMX).<ref>Although SSE/SSE2/SSE3 have superseded MMX in Intel's general purpose CPUs, later [[IA-32]] designs still support MMX. This is usually accomplished by providing most of the MMX functionality with the same hardware that supports the much more expansive SSE instruction sets.</ref>

==See also==

* [[Computer architecture]]
* [[Computer engineering]]

==References==

==External links==

;Microprocessor designers
*[http://www.amd.com/ Advanced Micro Devices] - [[Advanced Micro Devices]], a designer of primarily [[x86]]-compatible personal computer CPUs.
*[http://www.gamezero.com/team-0/articles/math_magic/micro/index.html Processor Design: An Introduction] - Detailed introduction to microprocessor design. Somewhat incomplete and outdated, but still worthwhile.
*[http://computer.howstuffworks.com/microprocessor.htm How Microprocessors Work]
*[http://arstechnica.com/articles/paedia/cpu/pipelining-2.ars/2 Pipelining: An Overview] - Good introduction to and overview of CPU pipelining techniques by the staff of [http://arstechnica.com/index.ars Ars Technica]
*[http://arstechnica.com/articles/paedia/cpu/simd.ars/ SIMD Architectures] - Introduction to and explanation of SIMD, especially how it relates to personal computers. Also by Ars Technica

==Notes==

<references />

Latest revision as of 11:00, 26 July 2024

A central processing unit (CPU), often called the processor, is the component in an electronic computer that carries out the instructions of its programs and manipulates data; this includes performing calculations on numbers and determining which particular steps to perform next.

The particular function to be performed at any point in time is based on a machine instruction, also called machine code or an opcode, which is generally automatically retrieved from the memory. A sequence of opcodes, executed sequentially, is called a computer program.

Depending on the exact design of the particular CPU, arithmetic operations may be performed on two numbers that have previously been stored inside the processor in special storage elements called registers, or one or both may be retrieved from memory. After a calculation is performed, the resulting (newly calculated) number may be retained in a register, or written back to the computer's memory (again, depending on the particular CPU at hand).

Today's processors also do much more than just perform operations selected by program opcodes; they also perform data flow analysis on the instruction stream and apply a number of advanced heuristics to improve the throughput rate of instructions. Some techniques for speeding up processors are discussed in this article. For more information, see computer architecture.

A processor that is manufactured as a single integrated circuit is usually known as a microprocessor. Most CPUs in modern computers are microprocessors, and microprocessors are also used in many everyday items, from automobiles and household appliances to cellular phones and children's toys.

History

The phrase "central processing unit" and its abbreviation, CPU, have been used in the computer industry dating back to at least the 1960s (Weik 1961). The term is a description of a certain class of logic machines that can execute instructions. This broad definition can easily be applied to many early computers that existed long before the term "CPU" ever came into widespread usage. The form, design and implementation of CPUs have changed dramatically since the earliest examples.[1] For more information, please see the history of processors article.

CPU operation

The basic operation of most CPUs, regardless of the physical form they take, is to execute a sequence of stored instructions called a program. The program is represented by a series of numbers that are stored in some kind of memory. There are four steps that many CPUs use in interacting with this data: fetch, decode, execute, and writeback. CPUs that follow this design of storing and calling up data are following the von Neumann architecture, commonly used in CPUs today.

Fetch

The first step, fetch, involves retrieving an instruction from memory. The location in program memory is determined by a program counter, a register that holds the address of the next instruction to be fetched. In other words, the program counter keeps track of the CPU's place in the current program. After an instruction is fetched, the program counter is incremented by the length of the instruction word in terms of memory units. Often the instruction to be fetched must be retrieved from relatively slow memory, causing the CPU to stall while waiting for the instruction to be returned. This is known as a bottleneck.

Decode

The instruction that the CPU fetches from memory is used to determine what the CPU is to do. In the decode step, the instruction is broken up into parts that different portions of the CPU can interpret. The way in which the numerical instruction value is interpreted is defined by the CPU's instruction set architecture (ISA). Often, one group of bits in the instruction, called the opcode, indicates which operation to perform. The remaining parts of the number usually provide information required for that instruction, such as operands for an addition operation. Such operands may be given as a constant value (called an immediate value), or as a reference to the value's location: a register or a memory address.
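As a rough illustration of the decode step, the sketch below splits a 16-bit instruction word into an opcode, a destination register, and an immediate value. The field layout (4 bits, 4 bits, 8 bits) and the name `decode` are assumptions made for this example; real ISAs define their own encodings.

```python
def decode(word):
    """Split a 16-bit instruction word into fields (hypothetical layout)."""
    opcode = (word >> 12) & 0xF     # top 4 bits select the operation
    dest = (word >> 8) & 0xF        # next 4 bits name a destination register
    immediate = word & 0xFF         # low 8 bits hold a constant operand
    return opcode, dest, immediate

fields = decode(0x1A2C)
```

Here the opcode field is 1, the destination register is 10, and the immediate value is 44.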

In older designs the portions of the CPU responsible for instruction decoding were fixed hardware devices. However, in more abstract and complicated CPUs and ISAs, a microprogram is often used to assist in translating instructions into various configuration signals for the CPU. This microprogram is sometimes rewritable so that it can be modified to change the way the CPU decodes instructions even after it has been manufactured.

Execute

After the fetch and decode steps, the execute step is performed. During this step, various portions of the CPU are connected so they can perform the desired operation. If, for instance, an addition operation was requested, an arithmetic logic unit (ALU) will be connected to a set of inputs and a set of outputs. The inputs provide the numbers to be added, and the outputs will contain the final sum. The ALU contains the circuitry to perform simple arithmetic and logical operations on the inputs (like addition and bitwise operations). If the addition operation produces a result too large for the CPU to handle, an arithmetic overflow flag in a flags register may also be set (see the discussion of integer range below).

Writeback

The final step, writeback, simply "writes back" the results of the execute step to some form of memory. Very often the results are written to some internal CPU register for quick access by subsequent instructions. In other cases results may be written to slower, but cheaper and larger, main memory. Some types of instructions manipulate the program counter rather than directly produce result data. These are generally called "jumps" and facilitate behavior like loops, conditional program execution (through the use of a conditional jump), and functions in programs.[2] Many instructions will also change the state of digits in a "flags" register. These flags can be used to influence how a program behaves, since they often indicate the outcome of various operations. For example, one type of "compare" instruction considers two values and sets a number in the flags register according to which one is greater. This flag could then be used by a later jump instruction to determine program flow.

After the execution of the instruction and writeback of the resulting data, the entire process repeats, with the next instruction cycle normally fetching the next-in-sequence instruction because of the incremented value in the program counter. If the completed instruction was a jump, the program counter will be modified to contain the address of the instruction that was jumped to, and program execution continues normally. In more complex CPUs than the one described here, multiple instructions can be fetched, decoded, and executed simultaneously. This section describes a simplified form of what is generally referred to as the "Classic RISC pipeline," which in fact is quite common among the simple CPUs used in many electronic devices (often called microcontrollers).
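The four steps can be sketched as a small interpreter loop. This is a toy model, not any real instruction set: the opcodes `LOAD`, `ADD`, `CMP`, `JLT`, and `HALT`, the four-register file, and the single "less than" flag are all assumptions chosen for illustration.

```python
# Toy instruction set (hypothetical): each instruction is (opcode, a, b).
LOAD, ADD, CMP, JLT, HALT = range(5)

def run(program):
    regs = [0] * 4           # four general-purpose registers
    flags = {"lt": False}    # a one-bit "flags" register
    pc = 0                   # program counter: address of the next instruction
    while True:
        instr = program[pc]  # fetch
        pc += 1              # advance past the fetched instruction
        op, a, b = instr     # decode into opcode and operands
        if op == LOAD:       # execute, then write back to a register
            regs[a] = b
        elif op == ADD:
            regs[a] = regs[a] + regs[b]
        elif op == CMP:      # compare sets a flag rather than a register
            flags["lt"] = regs[a] < regs[b]
        elif op == JLT:      # a conditional jump rewrites the program counter
            if flags["lt"]:
                pc = a
        elif op == HALT:
            return regs

# r0 = running sum, r1 = counter, r2 = step, r3 = limit
program = [
    (LOAD, 0, 0),
    (LOAD, 1, 0),
    (LOAD, 2, 1),
    (LOAD, 3, 5),
    (ADD, 1, 2),    # address 4: counter += step
    (ADD, 0, 1),    # sum += counter
    (CMP, 1, 3),    # flag = counter < limit
    (JLT, 4, 0),    # loop back to address 4 while the flag is set
    (HALT, 0, 0),
]
result = run(program)
```

Running the program adds the integers 1 through 5, leaving 15 in register 0. The compare and conditional jump together form the loop, as described in the writeback step.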

Design and implementation

Integer range

The way a CPU represents numbers is a design choice that affects the most basic ways in which the device functions. Some early digital computers used an electrical model of the common decimal (base ten) numeral system to represent numbers internally. A few other computers have used more exotic numeral systems like ternary (base three). Nearly all modern CPUs represent numbers in binary form, with each digit being represented by some two-valued physical quantity such as a "high" or "low" voltage.[3]

Related to number representation is the size and precision of numbers that a CPU can represent. In the case of a binary CPU, a bit refers to one significant place in the numbers a CPU deals with. The number of bits (or places) a CPU uses to represent numbers is often called "word size", "bit width", "data path width", or "integer precision" when dealing with strictly integer numbers (as opposed to floating point). This number differs between architectures, and often within different parts of the very same CPU. For example, an 8-bit CPU deals with a range of numbers that can be represented by eight binary digits (each digit having two possible values), that is, 2^8 or 256 discrete numbers. In effect, integer size sets a hardware limit on the range of integers the software run by the CPU can utilize.[4]

Integer range can also affect the number of locations in memory the CPU can address (locate). For example, if a binary CPU uses 32 bits to represent a memory address, and each memory address represents one octet (8 bits), the maximum quantity of memory that CPU can address is 2^32 octets, or 4 GiB. This is a very simple view of CPU address space, and many designs use more complex addressing methods like paging in order to locate more memory than their integer range would allow with a flat address space.
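The arithmetic behind these figures can be checked with a few lines of code. The helper names `unsigned_range` and `addressable_octets` are made up for this sketch, and it assumes unsigned integers and a flat address space with one octet per address.

```python
def unsigned_range(bits):
    """Return (number of distinct values, largest unsigned value) for a word size."""
    count = 2 ** bits
    return count, count - 1

def addressable_octets(address_bits):
    """Octets reachable in a flat address space with one octet per address."""
    return 2 ** address_bits

GIB = 2 ** 30  # one gibibyte in octets

values_8bit, max_8bit = unsigned_range(8)
flat_32bit_gib = addressable_octets(32) / GIB
```

An 8-bit word gives 256 distinct values (0 through 255), and a 32-bit address reaches 4 GiB.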

Higher levels of integer range require more structures to deal with the additional digits, and therefore more complexity, size, power usage, and generally expense. It is not at all uncommon, therefore, to see 4- or 8-bit microcontrollers used in modern applications, even though CPUs with much higher range (such as 16, 32, 64, even 128-bit) are available. The simpler microcontrollers are usually cheaper, use less power, and therefore dissipate less heat, all of which can be major design considerations for electronic devices. However, in higher-end applications, the benefits afforded by the extra range (most often the additional address space) are more significant and often affect design choices. To gain some of the advantages afforded by both lower and higher bit lengths, many CPUs are designed with different bit widths for different portions of the device. For example, the IBM System/370 used a CPU that was primarily 32 bit, but it used 128-bit precision inside its floating point units to facilitate greater accuracy and range in floating point numbers (Amdahl et al. 1964). Many later CPU designs use similar mixed bit width, especially when the processor is meant for general-purpose usage where a reasonable balance of integer and floating point capability is required.

Clock rate

Most processors, and indeed most sequential logic devices, operate synchronously.[5] Processors "make a move" each time a synchronization signal occurs. This signal, known as a clock signal, usually takes the form of a periodic square wave. By calculating the maximum time that electrical signals can propagate throughout various branches of a processor's many circuits, designers can select an appropriate period for the clock signal.

This period must be longer than the amount of time it takes for a signal to move, or propagate, in the worst-case scenario. In setting the clock period to a value well above the worst-case propagation delay, it is possible to design the entire CPU and the way it moves data around the "edges" of the rising and falling clock signal. This has the advantage of simplifying the CPU significantly, both from a design perspective and a component-count perspective. However, it also carries the disadvantage that the entire CPU must wait on its slowest elements, even though some portions of it are much faster. This limitation has largely been compensated for by various methods of increasing CPU parallelism (see below).
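As a simple sketch of this constraint, the function below picks a clock rate from a set of path delays. The delay figures, the 25% safety margin, and the name `max_clock_hz` are assumptions for illustration only; real timing analysis is far more involved.

```python
def max_clock_hz(path_delays_ns, margin=1.25):
    """Estimate a safe clock rate from per-path propagation delays in nanoseconds."""
    worst = max(path_delays_ns)    # the slowest path limits the whole CPU
    period_ns = worst * margin     # keep the period above the worst case
    return 1e9 / period_ns         # convert the period to a frequency in hertz

# Hypothetical delays for three portions of a CPU
delays = {"alu": 2.0, "register_file": 0.8, "decoder": 1.2}
freq = max_clock_hz(delays.values())
```

With these numbers the slowest path (2.0 ns) yields a 2.5 ns period, or 400 MHz, even though the other paths could run much faster.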

Architectural improvements alone do not solve all of the drawbacks of globally synchronous CPUs, however. For example, a clock signal is subject to the delays of any other electrical signal. Higher clock rates in increasingly complex CPUs make it more difficult to keep the clock signal in phase (synchronized) throughout the entire unit. This has led many modern CPUs to require multiple identical clock signals to be provided in order to avoid delaying a single signal significantly enough to cause the CPU to malfunction. Another major issue as clock rates increase dramatically is the amount of heat that is dissipated by the CPU. The constantly changing clock causes many components to switch regardless of whether they are being used at that time. In general, a component that is switching uses more energy than an element in a static state. Therefore, as clock rate increases, so does heat dissipation, causing the CPU to require more effective cooling solutions.

One method of dealing with the switching of unneeded components is called clock gating, which involves turning off the clock signal to unneeded components (effectively disabling them). However, this is often regarded as difficult to implement and therefore does not see common usage outside of very low-power designs.[6] Another method of addressing some of the problems with a global clock signal is the removal of the clock signal altogether. While removing the global clock signal makes the design process considerably more complex in many ways, asynchronous (or clockless) designs carry marked advantages in power consumption and heat dissipation in comparison with similar synchronous designs. While somewhat uncommon, entire CPUs have been built without utilizing a global clock signal. Two notable examples of this are the ARM compliant AMULET and the MIPS R3000 compatible MiniMIPS. Rather than totally removing the clock signal, some CPU designs allow certain portions of the device to be asynchronous, such as using asynchronous ALUs in conjunction with superscalar pipelining to achieve some arithmetic performance gains. While it is not altogether clear whether totally asynchronous designs can perform at a comparable or better level than their synchronous counterparts, it is evident that they do at least excel in simpler math operations. This, combined with their excellent power consumption and heat dissipation properties, makes them very suitable for embedded computers (Garside et al. 1999).

Parallelism

The description of the basic operation of a CPU offered in the previous section describes the simplest form that a CPU can take. This type of CPU, usually referred to as subscalar, operates on and executes one instruction on one or two pieces of data at a time.

This process gives rise to an inherent inefficiency in subscalar CPUs. Since only one instruction is executed at a time, the entire CPU must wait for that instruction to complete before proceeding to the next instruction. As a result the subscalar CPU gets "hung up" on instructions which take more than one clock cycle to complete execution. Even adding a second execution unit (see below) does not improve performance much; rather than one pathway being hung up, now two pathways are hung up and the number of unused transistors is increased. This design, wherein the CPU's execution resources can operate on only one instruction at a time, can only possibly reach scalar performance (one instruction per clock). However, the performance is nearly always subscalar (less than one instruction per cycle).

Attempts to achieve scalar and better performance have resulted in a variety of design methodologies that cause the CPU to behave less linearly and more in parallel. When referring to parallelism in CPUs, two terms are generally used to classify these design techniques. Instruction level parallelism (ILP) seeks to increase the rate at which instructions are executed within a CPU (that is, to increase the utilization of on-die execution resources), and thread level parallelism (TLP) aims to increase the number of threads (effectively individual programs) that a CPU can execute simultaneously. The two methodologies differ both in how they are implemented and in the relative effectiveness they afford in increasing the CPU's performance for an application.[7]

Instruction level parallelism

One of the simplest methods used to accomplish increased parallelism is to begin the first steps of instruction fetching and decoding before the prior instruction finishes executing. This is the simplest form of a technique known as instruction pipelining, and is utilized in almost all modern general-purpose CPUs. Pipelining allows more than one instruction to be executed at any given time by breaking down the execution pathway into discrete stages. This separation can be compared to an assembly line, in which an instruction is made more complete at each stage until it exits the execution pipeline and is retired.

Pipelining does, however, introduce the possibility for a situation where the result of the previous operation is needed to complete the next operation; a condition often termed data dependency conflict. To cope with this, additional care must be taken to check for these sorts of conditions and delay a portion of the instruction pipeline if this occurs. Naturally, accomplishing this requires additional circuitry, so pipelined processors are more complex than subscalar ones (though not very significantly so). A pipelined processor can become very nearly scalar, inhibited only by pipeline stalls (an instruction spending more than one clock cycle in a stage).
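The effect of pipelining and stalls on throughput can be estimated with a simple cycle-count model. This sketch assumes every stage takes one clock cycle and that stalls simply add cycles; the function names are invented for the example.

```python
def cycles_unpipelined(n_instructions, n_stages):
    # each instruction passes through every stage before the next one starts
    return n_instructions * n_stages

def cycles_pipelined(n_instructions, n_stages, stall_cycles=0):
    # the first instruction fills the pipeline; afterwards one instruction
    # completes per cycle, plus any cycles lost to stalls
    if n_instructions == 0:
        return 0
    return n_stages + (n_instructions - 1) + stall_cycles

def ipc(n_instructions, cycles):
    """Instructions completed per clock cycle."""
    return n_instructions / cycles

plain = cycles_unpipelined(100, 4)
piped = cycles_pipelined(100, 4)
stalled = cycles_pipelined(100, 4, stall_cycles=20)
```

For 100 instructions on a four-stage design, the unpipelined case needs 400 cycles and the ideal pipeline 103, which is very nearly one instruction per cycle. Twenty stall cycles raise the total to 123.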

Further improvement upon the idea of instruction pipelining led to the development of a method that decreases the idle time of CPU components even further. Designs that are said to be superscalar include a long instruction pipeline and multiple identical execution units. In a superscalar pipeline, multiple instructions are read and passed to a dispatcher, which decides whether or not the instructions can be executed in parallel (simultaneously). If so they are dispatched to available execution units, resulting in the ability for several instructions to be executed simultaneously. In general, the more instructions a superscalar CPU is able to dispatch simultaneously to waiting execution units, the more instructions will be completed in a given cycle.
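A dispatcher's decision can be sketched as a register-dependency check between two instructions. This is a deliberately conservative, simplified model: instructions are represented as hypothetical `(dest, src1, src2)` tuples, and any shared register that could cause a conflict blocks parallel issue.

```python
def can_dual_issue(first, second):
    """Decide whether two (dest, src1, src2) instructions may run in parallel."""
    d1, *srcs1 = first
    d2, *srcs2 = second
    reads_result = d1 in srcs2     # second needs the value first produces
    same_dest = d1 == d2           # both write the same register
    overwrites_src = d2 in srcs1   # second overwrites an input of first
    return not (reads_result or same_dest or overwrites_src)

independent = can_dual_issue(("r1", "r2", "r3"), ("r4", "r5", "r6"))
dependent = can_dual_issue(("r1", "r2", "r3"), ("r4", "r1", "r5"))
```

The first pair shares no registers and can be dispatched together; in the second pair the later instruction reads `r1`, so it must wait.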

Most of the difficulty in the design of a superscalar CPU architecture lies in creating an effective dispatcher. The dispatcher needs to be able to quickly and correctly determine whether instructions can be executed in parallel, as well as dispatch them in such a way as to keep as many execution units busy as possible. This requires that the instruction pipeline is filled as often as possible and gives rise to the need in superscalar architectures for significant amounts of CPU cache. It also makes hazard-avoiding techniques like branch prediction, speculative execution, and out-of-order execution crucial to maintaining high levels of performance. By attempting to predict which branch (or path) a conditional instruction will take, the CPU can minimize the number of times that the entire pipeline must wait until a conditional instruction is completed. Speculative execution often provides modest performance increases by executing portions of code that may or may not be needed after a conditional operation completes. Out-of-order execution somewhat rearranges the order in which instructions are executed to reduce delays due to data dependencies.
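One widely taught branch prediction scheme, not described in this article and shown here only as an example, is the two-bit saturating counter. The sketch below assumes a single counter for one branch and an arbitrary starting state.

```python
class TwoBitPredictor:
    """Saturating counter: states 0-1 predict not taken, 2-3 predict taken."""

    def __init__(self):
        self.counter = 1  # start weakly "not taken" (arbitrary choice)

    def predict(self):
        return self.counter >= 2

    def update(self, taken):
        # move one step toward the observed outcome, saturating at 0 and 3
        if taken:
            self.counter = min(3, self.counter + 1)
        else:
            self.counter = max(0, self.counter - 1)

def count_mispredictions(outcomes):
    predictor = TwoBitPredictor()
    misses = 0
    for taken in outcomes:
        if predictor.predict() != taken:
            misses += 1
        predictor.update(taken)
    return misses

# A loop branch that is taken nine times and then falls through on exit
loop_outcomes = [True] * 9 + [False]
misses = count_mispredictions(loop_outcomes)
```

For this loop the predictor is wrong only twice, on the first iteration and on the exit, so the pipeline rarely has to wait for the branch to resolve.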

In the case where a portion of the CPU is superscalar and part is not, the part which is not suffers a performance penalty due to scheduling stalls. The original Intel Pentium (P5) had two superscalar ALUs which could accept one instruction per clock each, but its FPU could not accept one instruction per clock. Thus the P5 was integer superscalar but not floating point superscalar. Intel's successor to the Pentium architecture, P6, added superscalar capabilities to its floating point features, and therefore afforded a significant increase in floating point instruction performance.

Both simple pipelining and superscalar design increase a CPU's ILP by allowing a single processor to complete execution of instructions at rates surpassing one instruction per cycle (IPC).[8] Most modern CPU designs are at least somewhat superscalar, and nearly all general purpose CPUs designed in the last decade are superscalar. In later years some of the emphasis in designing high-ILP computers has been moved out of the CPU's hardware and into its software interface, or ISA. The strategy of the very long instruction word (VLIW) causes some ILP to become implied directly by the software, reducing the amount of work the CPU must perform to boost ILP and thereby reducing the design's complexity.

Thread level parallelism

Another strategy commonly used to increase the parallelism of CPUs is to include the ability to run multiple threads (programs) at the same time. In general, high-TLP CPUs have been in use much longer than high-ILP ones. Many of the designs pioneered by Cray during the late 1970s and 1980s concentrated on TLP as their primary method of enabling enormous (for the time) computing capability. In fact, TLP in the form of multiple thread execution improvements was in use as early as the 1950s (Smotherman 2005). In the context of single processor design, the two main methodologies used to accomplish TLP are chip-level multiprocessing (CMP) and simultaneous multithreading (SMT). On a higher level, it is very common to build computers with multiple totally independent CPUs in arrangements like symmetric multiprocessing (SMP) and non-uniform memory access (NUMA).[9] While using very different means, all of these techniques accomplish the same goal: increasing the number of threads that the CPU(s) can run in parallel.

The CMP and SMP methods of parallelism are similar to one another and the most straightforward. Conceptually, they involve little more than the use of two or more complete and independent CPUs. In the case of CMP, multiple processor "cores" are included in the same package, sometimes on the very same integrated circuit.[10] SMP, on the other hand, includes multiple independent packages. NUMA is somewhat similar to SMP but uses a nonuniform memory access model. This is important for computers with many CPUs because, under SMP's shared memory model, the available memory bandwidth is quickly exhausted as processors are added, resulting in significant delays while CPUs wait for memory. Therefore, NUMA is considered a much more scalable model, allowing many more CPUs to be used in one computer than SMP can feasibly support.

SMT differs somewhat from other TLP improvements in that it attempts to duplicate as few portions of the CPU as possible. While considered a TLP strategy, its implementation more closely resembles superscalar design, and indeed it is often used in superscalar microprocessors (such as IBM's POWER5). Rather than duplicating the entire CPU, SMT designs duplicate only the parts needed for instruction fetching, decoding, and dispatch, as well as things like general-purpose registers. This allows an SMT CPU to keep its execution units busy more often by providing them with instructions from two different software threads. Again, this is very similar to the ILP superscalar method, but it simultaneously executes instructions from multiple threads rather than executing multiple instructions from the same thread concurrently.
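
The effect of SMT on execution-unit utilization can be sketched with a deliberately simplified Python model. It assumes a hypothetical core with `width` issue slots per cycle and two threads whose numbers of ready instructions per cycle are given as lists; instructions that do not issue are simply dropped rather than carried over, which a real core would not do. The names `issue_slots_used` and `utilization` are made up for this illustration.

```python
def issue_slots_used(ready_a, ready_b, width):
    """Compare slot usage for one thread alone versus two threads sharing a core.

    ready_a[i] and ready_b[i] are the numbers of instructions each thread
    has ready in cycle i (hypothetical figures). Returns a pair:
    (slots used running thread A alone, slots used with SMT over A and B).
    """
    single = sum(min(width, a) for a in ready_a)
    # With SMT, slots left empty by one thread can be filled by the other.
    smt = sum(min(width, a + b) for a, b in zip(ready_a, ready_b))
    return single, smt


def utilization(used, cycles, width):
    """Fraction of all available issue slots that were actually used."""
    return used / (cycles * width)


ready_a = [1, 0, 2, 1]
ready_b = [2, 1, 0, 3]
single, smt = issue_slots_used(ready_a, ready_b, width=2)
print("thread A alone:", utilization(single, len(ready_a), 2))
print("A and B with SMT:", utilization(smt, len(ready_a), 2))
```

With these made-up figures, the single thread fills half of the issue slots, while the two threads together fill seven of the eight, without any duplication of the execution units themselves.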

Data parallelism

A less common but increasingly important paradigm of CPUs (and indeed, computing in general) deals with vectors. The processors discussed earlier are all referred to as some type of scalar device.[11] As the name implies, vector processors deal with multiple pieces of data in the context of one instruction. This contrasts with scalar processors, which deal with one piece of data for every instruction. These two schemes of dealing with data are generally referred to as SISD (single instruction, single data) and SIMD (single instruction, multiple data), respectively. The great utility in creating CPUs that deal with vectors of data lies in optimizing tasks that tend to require the same operation (for example, a sum or a dot product) to be performed on a large set of data. Some classic examples of these types of tasks are multimedia applications (images, video, and sound), as well as many types of scientific and engineering tasks. Whereas a scalar CPU must complete the entire process of fetching, decoding, and executing each instruction and value in a set of data, a vector CPU can perform a single operation on a comparatively large set of data with one instruction. Of course, this is only possible when the application tends to require many steps which apply one operation to a large set of data.
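
The difference between the SISD and SIMD schemes can be made concrete with a small Python sketch that counts how many "instructions" each approach needs to add two lists element by element. This is only a counting model under the assumption of a hypothetical vector unit that processes `lanes` elements per instruction; the functions `scalar_add` and `vector_add` are illustrative names, and plain Python does not actually execute SIMD instructions here.

```python
def scalar_add(a, b):
    """SISD-style addition: one modeled instruction per pair of elements."""
    out = []
    instructions = 0
    for x, y in zip(a, b):
        out.append(x + y)
        instructions += 1
    return out, instructions


def vector_add(a, b, lanes=4):
    """SIMD-style addition: one modeled instruction per group of `lanes` elements."""
    out = []
    instructions = 0
    for i in range(0, len(a), lanes):
        # One vector instruction handles up to `lanes` element pairs at once.
        out.extend(x + y for x, y in zip(a[i:i + lanes], b[i:i + lanes]))
        instructions += 1
    return out, instructions


a = [1, 2, 3, 4, 5, 6, 7, 8]
b = [10, 20, 30, 40, 50, 60, 70, 80]
print(scalar_add(a, b))   # same sums, 8 modeled instructions
print(vector_add(a, b))   # same sums, 2 modeled instructions
```

Both functions produce the same sums; only the modeled instruction count differs, and the advantage disappears when the data set is small or when each element needs a different operation.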

Most early vector CPUs, such as the Cray-1, were associated almost exclusively with scientific research and cryptography applications. However, as multimedia has largely shifted to digital media, the need for some form of SIMD in general-purpose CPUs has become significant. Shortly after floating point execution units became commonplace in general-purpose processors, specifications for and implementations of SIMD execution units also began to appear for general-purpose CPUs. Some of these early SIMD specifications, like Intel's MMX, were integer-only. This proved to be a significant impediment for some software developers, since many of the applications that benefit from SIMD deal primarily with floating point numbers. Progressively, these early designs were refined and remade into some of the common, modern SIMD specifications, which are usually associated with one ISA. Some notable modern examples are Intel's SSE and the PowerPC-related AltiVec (also known as VMX).[12]

References

- Amdahl, G. M., Blaauw, G. A., & Brooks, F. P. Jr. (1964). Architecture of the IBM System/360. IBM Research.

- Brown, Jeffery (2005). Application-customized CPU design. IBM developerWorks. Retrieved on 2005-12-17.

- Digital Equipment Corporation (November 1975). “LSI-11 Module Descriptions”, LSI-11, PDP-11/03 user's manual, 2nd edition. Maynard, Massachusetts: Digital Equipment Corporation, 4-3.

- Garside, J. D., Furber, S. B., & Chung, S-H (1999). AMULET3 Revealed. University of Manchester Computer Science Department.

- Hennessy, John L.; Goldberg, David (1996). Computer Architecture: A Quantitative Approach. Morgan Kaufmann Publishers. ISBN 1-55860-329-8.

- MIPS Technologies, Inc. (2005). MIPS32® Architecture For Programmers Volume II: The MIPS32® Instruction Set. MIPS Technologies, Inc.

- Smotherman, Mark (2005). History of Multithreading. Retrieved on 2005-12-19.

- von Neumann, John (1945). First Draft of a Report on the EDVAC. Moore School of Electrical Engineering, University of Pennsylvania.

- Weik, Martin H. (1961). A Third Survey of Domestic Electronic Digital Computing Systems. Ballistic Research Laboratories.

External links

- Microprocessor designers

- Advanced Micro Devices - Advanced Micro Devices, a designer of primarily x86-compatible personal computer CPUs.

- ARM Ltd - ARM Ltd, one of the few CPU designers that profits solely by licensing its designs rather than manufacturing them. ARM architecture microprocessors are among the most popular in the world for embedded applications.

- Freescale Semiconductor (formerly of Motorola) - Freescale Semiconductor, designer of several embedded and SoC PowerPC based processors.

- IBM Microelectronics - Microelectronics division of IBM, which is responsible for many POWER and PowerPC based designs, including many of the CPUs used in recent video game consoles.

- Intel Corp - Intel, a maker of several notable CPU lines, including IA-32, IA-64, and XScale. Also a producer of various peripheral chips for use with their CPUs.

- MIPS Technologies - MIPS Technologies, developers of the MIPS architecture, a pioneer in RISC designs.

- Sun Microsystems - Sun Microsystems, developers of the SPARC architecture, a RISC design.

- Texas Instruments - Texas Instruments semiconductor division. Designs and manufactures several types of low power microcontrollers among their many other semiconductor products.

- Transmeta - Transmeta Corporation. Creators of low-power x86 compatibles like Crusoe and Efficeon.

- Further reading

- Processor Design: An Introduction - Detailed introduction to microprocessor design. Somewhat incomplete and outdated, but still worthwhile.

- How Microprocessors Work

- Pipelining: An Overview - Good introduction to and overview of CPU pipelining techniques by the staff of Ars Technica

- SIMD Architectures - Introduction to and explanation of SIMD, especially how it relates to personal computers. Also by Ars Technica

Notes

- ↑ Early CPUs were custom-designed for each new kind of computer. Custom processors gave way to mass-produced, general-purpose processors. This trend accelerated with the advent of the integrated circuit (IC), which allowed processors to become tiny (on the order of millimeters). Miniaturization and standardization led to additional uses, such as in personal digital assistants (PDAs).

- ↑ Some early computers like the Harvard Mark I did not support any kind of "jump" instruction, effectively limiting the complexity of the programs they could run. It is largely for this reason that these computers are often not considered to contain a CPU proper, despite their close similarity to stored-program computers.

- ↑ The physical concept of voltage is an analog one by its nature, practically having an infinite range of possible values. For the purpose of physical representation of binary numbers, set ranges of voltages are defined as one or zero. These ranges are usually influenced by the operational parameters of the switching elements used to create the CPU, such as a transistor's threshold level.

- ↑ While a processor's integer size determines its maximum integer range, this limit can be (and often is) overcome using a combination of software and hardware techniques. By using additional memory, software can represent integers many orders of magnitude larger than the processor can. Sometimes the processor's ISA will even facilitate operations on integers larger than it can natively represent by providing instructions to make large integer arithmetic relatively quick. While dealing with large integers in software is somewhat slower than utilizing a wider integer size in the processor hardware, it is a reasonable trade-off where wider integers in hardware would be cost-prohibitive. See Arbitrary-precision arithmetic for more on purely software-supported, arbitrary-sized integers.

- ↑ In fact, processors include a combination of sequential logic and combinatorial logic. (See Boolean logic.)

- ↑ One notable recent CPU design that uses clock gating is the IBM PowerPC-based CPU of the Xbox 360, which relies on extensive clock gating to reduce the power requirements of the console. (Brown 2005)

- ↑ Neither ILP nor TLP is inherently superior to the other; they are simply different means by which to increase CPU parallelism. As such, they both have advantages and disadvantages, which are often determined by the type of software that the processor is intended to run. High-TLP CPUs are often used in applications that lend themselves well to being split up into numerous smaller applications, so-called "embarrassingly parallel problems." Frequently, a computational problem that can be solved quickly with high-TLP design strategies like SMP takes significantly more time on high-ILP devices like superscalar CPUs, and vice versa.

- ↑ Best-case scenario (or peak) IPC rates in very superscalar architectures are difficult to maintain since it is impossible to keep the instruction pipeline filled all the time. Therefore, in highly superscalar CPUs, average sustained IPC is often discussed rather than peak IPC.

- ↑ Even though SMP and NUMA are both referred to as "systems level" TLP strategies, both methods must still be supported by the CPU's design and implementation.

- ↑ While TLP methods have generally been in use longer than ILP methods, chip-level multiprocessing is more or less only seen in later IC-based microprocessors. This is largely because the term itself is inapplicable to earlier discrete component devices and has only come into use recently.

For several years during the late 1990s and early 2000s, the focus in designing high-performance general-purpose CPUs was largely on highly superscalar IPC designs, such as the Intel Pentium 4. However, this trend seems to be reversing somewhat as major general-purpose CPU designers switch back to less deeply pipelined high-TLP designs. This is evidenced by the proliferation of dual- and multi-core CMP designs and, notably, by Intel's newer designs resembling its less superscalar P6 architecture. Recent designs in several processor families exhibit CMP, including the x86-64 Opteron and Athlon 64 X2, the SPARC UltraSPARC T1, IBM POWER4 and POWER5, as well as several video game console CPUs like the Xbox 360's triple-core PowerPC design.

- ↑ Earlier the term scalar was used to compare the IPC (instructions per cycle) count afforded by various ILP methods. Here the term is used in the strictly mathematical sense to contrast with vectors. See scalar (mathematics) and vector (spatial).

- ↑ Although SSE/SSE2/SSE3 have superseded MMX in Intel's general purpose CPUs, later IA-32 designs still support MMX. This is usually accomplished by providing most of the MMX functionality with the same hardware that supports the much more expansive SSE instruction sets.
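
The note earlier in this list about integers larger than a processor's native size can be illustrated with a short Python sketch of multi-word addition. It assumes a hypothetical 32-bit machine word and stores each large integer as a list of words, least significant first; the carry out of one word is fed into the next, much as an add-with-carry instruction would do in hardware. The names `to_words`, `from_words`, and `add_words` are invented for this example.

```python
WORD_BITS = 32                      # assumed native word size for this sketch
WORD_MASK = (1 << WORD_BITS) - 1


def to_words(n, count):
    """Split a non-negative integer into `count` words, least significant first."""
    return [(n >> (WORD_BITS * i)) & WORD_MASK for i in range(count)]


def from_words(words):
    """Reassemble an integer from a list of words, least significant first."""
    return sum(w << (WORD_BITS * i) for i, w in enumerate(words))


def add_words(a, b):
    """Add two equal-length word lists, propagating the carry between words.

    Returns (result words, final carry out of the most significant word).
    """
    out = []
    carry = 0
    for x, y in zip(a, b):
        s = x + y + carry
        out.append(s & WORD_MASK)   # keep only what fits in one word
        carry = s >> WORD_BITS      # 0 or 1, passed on to the next word
    return out, carry


a = to_words(2**64 - 1, 3)          # needs more than one 32-bit word
b = to_words(1, 3)
total, carry = add_words(a, b)
print(from_words(total) == 2**64, carry)
```

Each loop iteration stands in for one native-width addition, so a sum that a wider processor could compute in one step takes several steps here; this is the trade-off described in the note.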